When you’re trying to communicate or understand ideas, words don’t always do the trick. Sometimes the more efficient approach is to make a simple sketch of the concept. Diagramming a circuit, for example, might help make sense of how the system works.

But what if artificial intelligence could help us explore these visualizations? While AI systems are typically proficient at creating realistic paintings and cartoonish drawings, many models fail to capture the essence of sketching: its stroke-by-stroke, iterative process, which helps humans brainstorm and edit how they want to represent their ideas.

A new drawing system from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) and Stanford University can sketch more like we do. Their method, called “SketchAgent,” uses a multimodal language model (an AI system trained on text and images, like Anthropic’s Claude 3.5 Sonnet) to turn natural language prompts into sketches in a few seconds. For example, it can doodle a house either on its own or through collaboration, drawing with a human or incorporating text-based input to sketch each part separately.

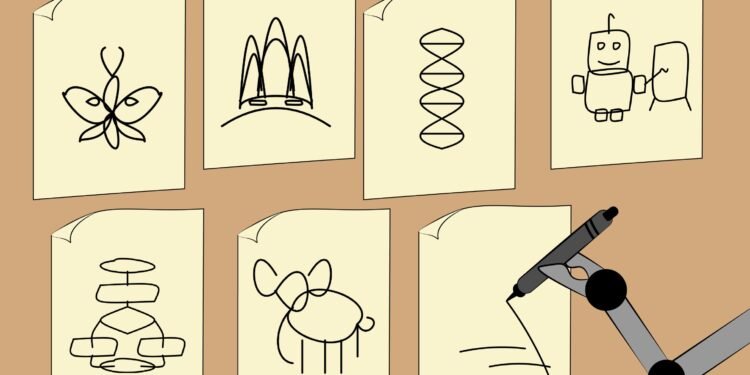

The researchers showed that SketchAgent can create abstract drawings of diverse concepts, like a robot, butterfly, DNA helix, flowchart, and even the Sydney Opera House. One day, the tool could be expanded into an interactive art game that helps teachers and researchers diagram complex concepts or gives users a quick drawing lesson.

CSAIL postdoc Yael Vinker, who is the lead author of a paper introducing SketchAgent, notes that the system introduces a more natural way for humans to communicate with AI.

“Not everyone is aware of how much they draw in their daily life. We may draw our thoughts or workshop ideas with sketches,” she says. “Our tool aims to emulate that process, making multimodal language models more useful in helping us visually express ideas.”

SketchAgent teaches these models to draw stroke-by-stroke without training on any data—instead, the researchers developed a “sketching language” in which a sketch is translated into a numbered sequence of strokes on a grid. The system was given an example of how things like a house would be drawn, with each stroke labeled according to what it represented—such as the seventh stroke being a rectangle labeled as a “front door”—to help the model generalize to new concepts.
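To make the idea of a "sketching language" concrete, here is a minimal Python illustration of a sketch stored as a numbered, labeled sequence of strokes on a grid. It is not the authors' actual format: the grid size, the `x..y..` cell notation, the `Stroke` class, and the helper names are all assumptions made for this example.

```python
from dataclasses import dataclass

GRID_SIZE = 50  # assumed grid resolution, chosen only for this illustration


@dataclass(frozen=True)
class Stroke:
    index: int                           # position in the drawing order, starting at 1
    label: str                           # what the stroke represents, e.g. "front door"
    points: tuple[tuple[int, int], ...]  # grid cells (column, row) the stroke passes through


def validate(strokes, grid_size=GRID_SIZE):
    """Check that strokes are numbered 1..n in order and stay on the grid."""
    for expected, stroke in enumerate(strokes, start=1):
        if stroke.index != expected:
            raise ValueError(f"stroke {stroke.index} is out of sequence")
        for x, y in stroke.points:
            if not (1 <= x <= grid_size and 1 <= y <= grid_size):
                raise ValueError(f"stroke {stroke.index} leaves the grid at ({x}, {y})")


def to_text(strokes):
    """Serialize strokes as plain text, one numbered and labeled stroke per line."""
    lines = []
    for stroke in strokes:
        cells = ", ".join(f"x{x}y{y}" for x, y in stroke.points)
        lines.append(f"s{stroke.index} [{stroke.label}]: {cells}")
    return "\n".join(lines)


# A toy three-stroke house (hypothetical coordinates).
house = [
    Stroke(1, "house base", ((10, 10), (40, 10), (40, 30), (10, 30), (10, 10))),
    Stroke(2, "roof", ((10, 30), (25, 45), (40, 30))),
    Stroke(3, "front door", ((22, 10), (22, 20), (28, 20), (28, 10))),
]

validate(house)
print(to_text(house))
```

Because the whole sketch is plain text, a language model can in principle read an example like this in its prompt and write new strokes in the same form, which matches the article's description of drawing without training on sketch data.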

Vinker wrote the paper alongside three CSAIL affiliates—postdoc Tamar Rott Shaham, undergraduate researcher Alex Zhao, and MIT Professor Antonio Torralba—as well as Stanford University Research Fellow Kristine Zheng and Assistant Professor Judith Ellen Fan. They’ll present their work at the Conference on Computer Vision and Pattern Recognition (CVPR 2025) this month. The paper is available on the arXiv preprint server.

Assessing AI’s sketching abilities

While text-to-image models such as DALL-E 3 can create intriguing drawings, they lack a crucial component of sketching: the spontaneous, creative process where each stroke can impact the overall design. On the other hand, SketchAgent’s drawings are modeled as a sequence of strokes, appearing more natural and fluid, like human sketches.

Prior works have mimicked this process, too, but they trained their models on human-drawn datasets, which are often limited in scale and diversity. SketchAgent uses pre-trained language models instead, which are knowledgeable about many concepts, but don’t know how to sketch. When the researchers taught language models this process, SketchAgent began to sketch diverse concepts it hadn’t been explicitly trained on.

Still, Vinker and her colleagues wanted to see if SketchAgent was actively working with humans on the sketching process, or if it was working independently of its drawing partner. The team tested their system in collaboration mode, where a human and a language model work toward drawing a particular concept in tandem. Removing SketchAgent’s contributions revealed that their tool’s strokes were essential to the final drawing. In a drawing of a sailboat, for instance, removing the artificial strokes representing a mast made the overall sketch unrecognizable.

In another experiment, CSAIL and Stanford researchers plugged different multimodal language models into SketchAgent to see which could create the most recognizable sketches. Their default backbone model, Claude 3.5 Sonnet, generated the most human-like vector graphics (essentially text-based files that can be converted into high-resolution images). It outperformed models like GPT-4o and Claude 3 Opus.
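The remark that vector graphics are essentially text files can be illustrated with a short Python example that turns grid-based strokes into an SVG string. This shows the general idea only, not SketchAgent's rendering code: the function name `strokes_to_svg`, the grid size, the pixel scale, and the choice to put grid row 1 at the bottom of the image are all assumptions.

```python
from xml.sax.saxutils import escape


def strokes_to_svg(strokes, grid_size=50, cell_px=10):
    """Render (label, points) strokes as SVG polylines.

    Points are (column, row) grid cells counted from 1. Row 1 is assumed to be
    the bottom of the canvas, so the y axis is flipped for SVG, whose origin is
    the top-left corner. Each point is placed at the center of its cell.
    """
    size = grid_size * cell_px
    parts = [
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{size}" '
        f'height="{size}" viewBox="0 0 {size} {size}">'
    ]
    for label, points in strokes:
        coords = " ".join(
            f"{(x - 0.5) * cell_px:g},{(grid_size - y + 0.5) * cell_px:g}"
            for x, y in points
        )
        parts.append(
            f'  <polyline points="{coords}" fill="none" stroke="black" '
            f'stroke-width="2"><title>{escape(label)}</title></polyline>'
        )
    parts.append("</svg>")
    return "\n".join(parts)


# Hypothetical two-stroke sailboat fragment.
sailboat = [
    ("hull", [(10, 10), (40, 10), (35, 5), (15, 5), (10, 10)]),
    ("mast", [(25, 10), (25, 40)]),
]

svg_text = strokes_to_svg(sailboat)
print(svg_text)
```

Writing `svg_text` to a file with an `.svg` extension yields an image that stays sharp at any resolution, since the file stores line coordinates rather than pixels.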

“The fact that Claude 3.5 Sonnet outperformed other models like GPT-4o and Claude 3 Opus suggests that this model processes and generates visual-related information differently,” says co-author Tamar Rott Shaham.

She adds that SketchAgent could become a helpful interface for collaborating with AI models beyond standard, text-based communication. “As models advance in understanding and generating other modalities, like sketches, they open up new ways for users to express ideas and receive responses that feel more intuitive and human-like,” says Shaham. “This could significantly enrich interactions, making AI more accessible and versatile.”

While SketchAgent’s drawing prowess is promising, it can’t make professional sketches yet. It renders simple representations of concepts using stick figures and doodles, but struggles to doodle things like logos, sentences, complex creatures like unicorns and cows, and specific human figures.

At times, their model also misunderstood users’ intentions in collaborative drawings, like when SketchAgent drew a bunny with two heads. According to Vinker, this may be because the model breaks down each task into smaller steps (also called “Chain of Thought” reasoning).

When working with humans, the model creates a drawing plan, potentially misinterpreting which part of that outline a human is contributing to. The researchers could possibly refine these drawing skills by training on synthetic data from diffusion models.

Additionally, SketchAgent often requires a few rounds of prompting to generate human-like doodles. In the future, the team aims to make it easier to interact and sketch with multimodal language models, including refining their interface.

Still, the tool suggests AI could draw diverse concepts the way humans do, with step-by-step human-AI collaboration that results in more aligned final designs.

More information:

Yael Vinker et al, SketchAgent: Language-Driven Sequential Sketch Generation, arXiv (2024). DOI: 10.48550/arxiv.2411.17673


Citation: Teaching AI models the broad strokes to sketch more like humans do (2025, June 3), retrieved 3 June 2025 from https://techxplore.com/news/2025-06-ai-broad-humans.html
