The latest generative AI models such as OpenAI’s ChatGPT-4 and Google’s Gemini 2.5 require not only high memory bandwidth but also large memory capacity. This is why companies that operate generative AI clouds, such as Microsoft and Google, purchase hundreds of thousands of NVIDIA GPUs.

To address the core challenges of building such high-performance AI infrastructure, Korean researchers have developed an NPU (neural processing unit) core technology that improves the inference performance of generative AI models by more than 60% on average while consuming approximately 44% less power than the latest GPUs.

Professor Jongse Park’s research team from KAIST School of Computing, in collaboration with HyperAccel Inc., developed a high-performance, low-power NPU core technology specialized for generative AI clouds like ChatGPT.

The technology proposed by the research team was presented by Ph.D. student Minsu Kim and Dr. Seongmin Hong from HyperAccel Inc. as co-first authors at the 2025 International Symposium on Computer Architecture (ISCA 2025), held in Tokyo, June 21–25.

The key objective of this research is to improve the performance of large-scale generative AI services by making the inference process more lightweight, while minimizing accuracy loss and resolving memory bottlenecks. The work has been recognized for its integrated design of AI semiconductors and AI system software, which are key components of AI infrastructure.

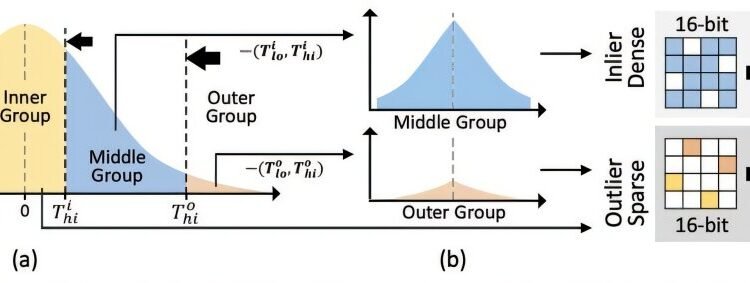

While existing GPU-based AI infrastructure requires multiple GPU devices to meet high bandwidth and capacity demands, this technology makes it possible to build the same level of AI infrastructure with fewer NPU devices through KV cache quantization. Because the KV cache accounts for most of the memory usage, quantizing it significantly reduces the cost of building generative AI clouds.
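To make the memory argument concrete, here is a back-of-the-envelope sketch of how KV cache size scales with bit width. The model shape and workload below are hypothetical values chosen for illustration, not figures from the research, and the calculation ignores the small overhead of quantization metadata such as scales.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, batch, bits):
    """Bytes needed to store keys and values for every layer and token."""
    # Factor of 2: one tensor for keys and one for values.
    elements = 2 * layers * kv_heads * head_dim * seq_len * batch
    return elements * bits // 8

# Hypothetical model shape and serving workload (assumptions, not from the article).
cfg = dict(layers=32, kv_heads=32, head_dim=128, seq_len=4096, batch=32)

GIB = 1024 ** 3
fp16_gib = kv_cache_bytes(**cfg, bits=16) / GIB
int4_gib = kv_cache_bytes(**cfg, bits=4) / GIB

print(f"16-bit KV cache: {fp16_gib:.0f} GiB")
print(f" 4-bit KV cache: {int4_gib:.0f} GiB")
```

Under these assumptions the cache shrinks from 64 GiB to 16 GiB, which is the kind of reduction that lets the same workload fit on fewer devices.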

The research team designed the technology so that it integrates with memory interfaces without changing the operational logic of existing NPU architectures. The hardware architecture not only implements the proposed quantization algorithm but also adopts page-level memory management techniques to make efficient use of limited memory bandwidth and capacity, and introduces new encoding techniques optimized for the quantized KV cache.
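For readers unfamiliar with quantization, the following is a minimal sketch of generic uniform quantization applied to one group of values. It is not the algorithm proposed by the research team; the function names, group contents, and 4-bit width are assumptions made purely for illustration.

```python
def quantize_group(values, bits=4):
    """Uniform asymmetric quantization of one group of floats to integer codes."""
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1                      # e.g. 15 steps for 4 bits
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, scale, lo

def dequantize_group(codes, scale, lo):
    """Map integer codes back to approximate float values."""
    return [c * scale + lo for c in codes]

# Hypothetical slice of key/value activations.
group = [-1.5, -0.25, 0.0, 0.4, 0.9, 1.5]
codes, scale, lo = quantize_group(group, bits=4)
restored = dequantize_group(codes, scale, lo)

# Rounding to the nearest level bounds the error by half a step.
max_err = max(abs(a - b) for a, b in zip(group, restored))
print(codes, f"max error = {max_err:.3f}")
```

Each value is stored as a small integer plus a shared scale and offset per group, which is where the memory saving comes from. Production schemes typically add special handling for outlier values to limit accuracy loss.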

Furthermore, because NPUs deliver high performance at low power, an NPU-based AI cloud with better cost and power efficiency than the latest GPUs is expected to significantly reduce operating costs.

Professor Jongse Park said, “This research, through joint work with HyperAccel Inc., found a solution in generative AI inference light-weighting algorithms and succeeded in developing a core NPU technology that can solve the memory problem. Through this technology, we implemented an NPU with over 60% improved performance compared to the latest GPUs by combining quantization techniques that reduce memory requirements while maintaining inference accuracy, and hardware designs optimized for this.

“This technology has demonstrated the possibility of implementing high-performance, low-power infrastructure specialized for generative AI, and is expected to play a key role not only in AI cloud data centers but also in the AI transformation (AX) environment represented by dynamic, executable AI such as agentic AI.”

More information:

Minsu Kim et al, Oaken: Fast and Efficient LLM Serving with Online-Offline Hybrid KV Cache Quantization, Proceedings of the 52nd Annual International Symposium on Computer Architecture (2025). DOI: 10.1145/3695053.3731019

The Korea Advanced Institute of Science and Technology (KAIST)

Citation: AI cloud infrastructure gets faster and greener: NPU core improves inference performance by over 60% (2025, July 7), retrieved 7 July 2025 from https://techxplore.com/news/2025-07-ai-cloud-infrastructure-faster-greener.html
